# Automatic Speech Recognition

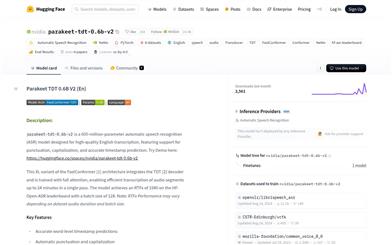

parakeet-tdt-0.6b-v2

parakeet-tdt-0.6b-v2 is a 600-million-parameter automatic speech recognition (ASR) model for high-quality English transcription, with accurate timestamp prediction and automatic punctuation and capitalization. It is based on the FastConformer architecture and can efficiently process audio clips up to 24 minutes long, which makes it suitable for developers, researchers, and a range of industry applications.

Speech Recognition

38.6K
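The 24-minute clip limit quoted for parakeet-tdt-0.6b-v2 means longer recordings have to be split before transcription. The sketch below only plans the segment boundaries. The function name `plan_segments` is hypothetical and not part of any model toolkit, and cutting at fixed offsets is an assumption made for illustration; a real pipeline would more likely cut at silences so words are not split.

```python
def plan_segments(total_seconds, max_seconds=24 * 60):
    """Return (start, end) offsets in seconds, each at most max_seconds long.

    Hypothetical helper: the 24-minute default comes from the model
    description above; the fixed-offset splitting strategy is an assumption.
    """
    if total_seconds < 0 or max_seconds <= 0:
        raise ValueError("total_seconds must be >= 0 and max_seconds > 0")

    total = float(total_seconds)
    segments = []
    start = 0.0
    while start < total:
        # Clamp the last segment to the end of the recording.
        end = min(start + max_seconds, total)
        segments.append((start, end))
        start = end
    return segments
```

For a one-hour recording this yields two full 24-minute segments followed by a 12-minute remainder; each pair of offsets can then be used to slice the audio before it is passed to the model.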

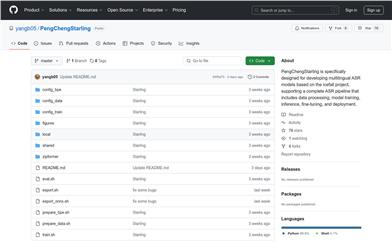

PengChengStarling

PengChengStarling is an open-source toolkit for multilingual automatic speech recognition (ASR), built on the icefall project. It covers the entire ASR pipeline: data processing, model training, inference, fine-tuning, and deployment. By optimizing parameter configurations and integrating language identifiers into the RNN-Transducer architecture, it significantly improves the performance of multilingual ASR systems. Its main advantages are efficient multilingual support, a flexible configuration design, and robust inference performance. Its models perform well across languages while remaining relatively small and fast at inference, which suits scenarios that demand efficient speech recognition.

Speech Recognition

49.7K

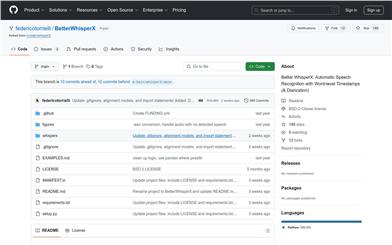

BetterWhisperX

BetterWhisperX is an improved automatic speech recognition tool based on WhisperX, offering fast speech-to-text with word-level timestamps and speaker identification. It is aimed at researchers and developers who handle large volumes of audio data, where it improves the efficiency and accuracy of speech data processing. It builds on OpenAI's Whisper model with further optimizations and improvements. The project is currently free and open-source.

Speech Recognition

67.1K

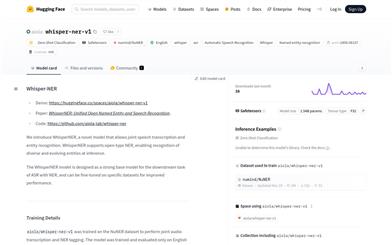

Whisper-NER v1

Whisper-NER is an innovative model that allows for simultaneous speech transcription and entity recognition. This model supports open-type Named Entity Recognition (NER) and can identify a diverse and evolving set of entities. Whisper-NER is designed as a robust foundational model for automatic speech recognition (ASR) and NER downstream tasks and can be fine-tuned on specific datasets to enhance performance.

Entity Recognition

50.0K

WhisperNER

WhisperNER is a unified model that combines automatic speech recognition (ASR) and named entity recognition (NER) and has zero-shot capabilities. It is designed as a robust foundation model for downstream tasks that pair ASR with NER, and it can be fine-tuned on specific datasets to improve performance. Its main benefit is handling speech recognition and entity recognition simultaneously, which improves efficiency and accuracy, especially in multilingual and cross-domain scenarios.

Named Entity Recognition

50.2K

Moonshine

Moonshine is a suite of speech-to-text models optimized for resource-constrained devices, which makes it well suited to real-time, on-device applications such as live transcription and voice command recognition. It achieves a lower word error rate (WER) than OpenAI Whisper models of the same size on the test datasets used in the Open ASR Leaderboard maintained by Hugging Face. Moonshine's compute cost also scales with the length of the input audio, so short clips are processed more quickly than with Whisper, which processes everything in 30-second chunks. On 10-second audio segments, Moonshine runs five times faster than Whisper while maintaining the same or better WER.

Speech Recognition

55.8K
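The Moonshine comparison above is stated in terms of word error rate (WER). As a minimal sketch of what that metric measures, the function below computes WER as the word-level edit distance (substitutions, deletions, insertions) divided by the number of reference words. The name `word_error_rate` is hypothetical, and splitting on whitespace without any text normalization is a simplifying assumption; leaderboards typically normalize case and punctuation first.

```python
def word_error_rate(reference, hypothesis):
    """WER = word-level edit distance / number of reference words.

    Illustrative sketch: tokenizes on whitespace only, with no
    case or punctuation normalization (an assumption).
    """
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] holds the edit distance between the reference words
    # processed so far and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (0 if ref_word == hyp_word else 1)
            deletion = prev[j] + 1       # reference word missing from hypothesis
            insertion = curr[j - 1] + 1  # extra word in hypothesis
            curr[j] = min(substitution, deletion, insertion)
        prev = curr
    return prev[-1] / len(ref)
```

For the reference "the cat sat on the mat" and the hypothesis "the cat sit on mat", there is one substitution and one deletion against six reference words, so the WER is 2/6. Note that WER can exceed 1.0 when the hypothesis contains many inserted words.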

CrisperWhisper

CrisperWhisper is an advanced variant of OpenAI's Whisper model, specifically designed for fast, accurate, verbatim speech recognition, providing precise word-level timestamps. Unlike the original Whisper model, CrisperWhisper aims to transcribe every spoken word, including filler words, pauses, stutters, and false starts. This model ranks first in word-level datasets such as TED and AMI, and has been accepted at INTERSPEECH 2024.

AI speech recognition

53.8K

seed-tts-eval

seed-tts-eval is a test set for evaluating a model's zero-shot speech generation capability. It provides an objective, out-of-domain evaluation set built from samples drawn from public English and Mandarin corpora, and it is used to measure a model's performance on various objective metrics. It uses 1,000 samples from the Common Voice dataset and 2,000 samples from the DiDiSpeech-2 dataset.

AI Model

128.6K

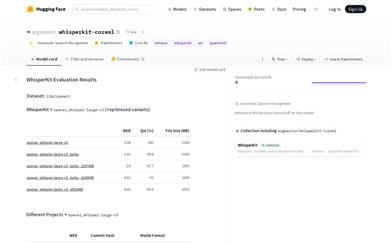

WhisperKit

WhisperKit is a tool for compressing and optimizing automatic speech recognition (ASR) models, and it provides detailed performance evaluation data for the results. It also offers quality assurance certifications across datasets and model formats, and supports reproducing test results locally.

AI speech recognition

116.7K
